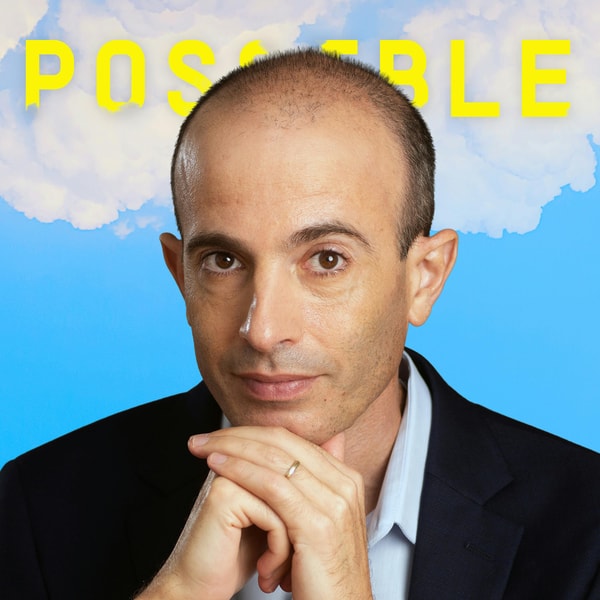

Yuval Noah Harari on trust, the dangers of AI, power, and revolutions

Possible

2025/06/04

In this episode of the podcast *Possible*, hosts Reid Hoffman and Aria Finger engage in a thought-provoking conversation with historian Yuval Noah Harari about the future of artificial intelligence and its implications for trust, consciousness, and global society. The discussion explores how AI might reshape human civilization, drawing comparisons to past technological revolutions like writing and industrialization. While differing perspectives emerge on the potential of AI, there is agreement on the need to guide its development with ethical considerations and self-correcting mechanisms.

The conversation centers on the transformative power of AI and the challenges it poses to human society. Harari compares AI’s potential impact to that of writing, suggesting it could become a cosmic-scale force within a century. The speakers reflect on historical precedents, such as the Industrial Revolution, to highlight the risks of poor adaptation, including unemployment, inequality, and authoritarianism. They stress the importance of building trustworthy AI that aligns with human values, emphasizing the role of compassion, truth-seeking, and global cooperation. The distinction between intelligence and consciousness is explored, with skepticism around whether AI can ever truly be conscious or morally reliable. The discussion also touches on using AI to enhance democratic processes and rebuild institutional trust, while cautioning against cynicism and unchecked power dynamics in AI development.

00:00

AI is a transformative tool with significant risks and rewards.

03:58

Consciousness is the capacity to suffer and feel joy, central to ethics.

10:37

AI is the most significant invention.

17:11

If humanity handles AI the way it handled the Industrial Revolution, billions may pay a high price.

20:19

A key question is whether AI can be used to speed up society's self-correcting mechanisms.

24:38

AI cannot be fully trusted to verify itself due to inherent biases and lack of transparency.

26:51

AIs learn from human behavior, so if humans are untrustworthy, it's impossible to create trustworthy AI.

35:00

AI may learn harmful behaviors from real-world figures like Elon Musk and Donald Trump.

39:11

Most AI revolution leaders recognize the risks but are reluctant to slow down due to competition.

41:30

AI lacks consciousness and the capacity to suffer.

42:35

A super-intelligent AI without consciousness may not pursue truth.

48:25

We now have the understanding and resources to address modern issues like nuclear war, climate change, and the AI revolution, but we still need motivation and trust.